Over the years, we have seen paradigm shifts transforming the way interpreting services are delivered to end users. In the early 1980s, on-demand over-the-phone interpretation emerged as a powerful challenger to onsite consecutive interpretation, particularly in the healthcare industry. In the early 2000s, video remote interpretation greatly improved the experience for users who needed to connect to an interpreter instantaneously via video call.

More recently, interpreting has seen another major change: the introduction of remote simultaneous interpretation, or RSI. Ideal for real-time interpreting in hybrid and virtual meetings, RSI has become ubiquitous in just a few years. Today, RSI is also a cost-saving option for in-person events, as interpreters do not need to be flown in and accommodated, and there is no need for soundproof booths.

Note: Since publishing this blog post, we have updated the name of our AI-powered solution to "AI Speech Translation".

AI Driving Innovation in Language Services

Now another big shift seems to be underway, and it’s being driven by AI. Research and development in areas such as natural language processing and machine learning are not only bringing us ChatGPT and a host of other AI-powered tools. Advances in machine learning algorithms are also propelling the field of neural machine translation and, as a next step, real-time AI speech translation.

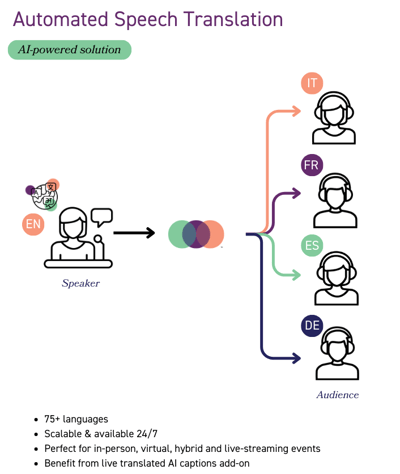

In a nutshell, automated speech translation works in 3 steps:

Step 1: A (human) presenter's speech or presentation at a live event is converted into live caption text using automatic speech recognition (ASR).

Step 2: This ASR-generated output text is immediately translated into another language using neural machine translation.

Step 3: The translated text is then synthesized into speech in the desired target language using voice synthesis technology, also called text-to-speech or TTS.

All this happens almost instantaneously, with a delay of just a few seconds.
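
The three steps above can be pictured as a simple chain of functions. The sketch below is only an illustration of that flow, not how any particular product is built: the class `SpeechTranslationPipeline` and the toy stages `fake_asr`, `fake_nmt`, and `fake_tts` are hypothetical names invented for this example, and a real system would call actual ASR, machine translation, and TTS engines on streaming audio.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SpeechTranslationPipeline:
    """Hypothetical three-stage pipeline: ASR -> NMT -> TTS."""
    recognize: Callable[[bytes], str]        # Step 1: audio -> source-language text
    translate: Callable[[str, str], str]     # Step 2: (text, target language) -> translated text
    synthesize: Callable[[str, str], bytes]  # Step 3: (text, target language) -> audio

    def run(self, audio_chunk: bytes, target_lang: str) -> bytes:
        caption = self.recognize(audio_chunk)
        translated = self.translate(caption, target_lang)
        return self.synthesize(translated, target_lang)


# Toy stand-ins so the sketch runs without any external service.
def fake_asr(audio: bytes) -> str:
    # Pretend the "audio" bytes already contain the transcript.
    return audio.decode("utf-8")


TOY_GLOSSARY = {("good morning", "es"): "buenos días"}


def fake_nmt(text: str, target_lang: str) -> str:
    # Look the phrase up in a tiny glossary; fall back to the source text.
    return TOY_GLOSSARY.get((text.lower(), target_lang), text)


def fake_tts(text: str, target_lang: str) -> bytes:
    # Pretend to synthesize speech by tagging the text with a voice label.
    return f"[{target_lang} voice] {text}".encode("utf-8")


pipeline = SpeechTranslationPipeline(fake_asr, fake_nmt, fake_tts)
output_audio = pipeline.run(b"Good morning", "es")
print(output_audio.decode("utf-8"))  # [es voice] buenos días
```

Because each stage feeds the next, an error made in speech recognition is carried into the translation and then into the synthesized voice.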

Automated Speech Translation: Too Good to Be True?

Does AI speech translation technology sound too good to be true? There is a growing consensus that neural machine translation is rapidly becoming more accurate.

Plug-and-play solutions make it easy for clients to set up automated speech translation for their event or meeting and send the audio to their intended audience on any device, in any location. Unlike most forms of human interpretation, AI speech translation does not require video or a direct view of the speakers; a simple audio feed is all that is needed for the machine to generate speech translation in numerous languages at the same time. The client can even select the accent or gender for the AI voice.

At Interprenet, we use Interprefy’s Aivia technology to power our automated speech translation solution. Interprefy is a leader in AI language translation technology and benchmarks the best AI engines for each language combination to ensure optimal output.

However, despite the benefits that speech-to-speech translation offers, the technology is not accurate enough to bring about a paradigm shift in the world of interpreting. In almost all industries, be it financial, legal, pharmaceutical, government, or educational, to name a few, interpreting is done by professional human interpreters because they can ensure a near-perfect level of accuracy. Interpreters are not only expected to interpret word for word but also to convey the intended meaning from one language to another accurately.

Machines do not have common sense and reasoning abilities

Unlike humans, machines lack common sense and reasoning abilities. To interpret as accurately as possible from one language to another, you need to understand the physical world well enough to grasp the context of the communication you are interpreting. Here’s an example from the original “Mary Poppins” movie. One character says: “I know a man with a wooden leg named Smith.” Another replies: “What’s the name of his other leg?” Here we see the ambiguity of everyday language. We need to understand that the name “Smith” refers to the man and not the wooden leg; without that understanding, we end up confused, as the question shows. This understanding is the result of common sense and logical thinking.

If AI speech translation gets nine words out of ten right, but the one word that is mistranslated changes the entire meaning of the sentence, then the resulting translation is completely wrong. In other words, it is not 90% correct but 100% wrong from the listener’s perspective!
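
The gap between word-level accuracy and what the listener actually takes away can be shown with a small calculation. This is a minimal sketch with an invented example sentence; the helper functions `word_accuracy` and `meaning_preserved` and the idea of a hand-picked set of "critical words" are assumptions made for illustration, not an established quality metric.

```python
def word_accuracy(reference: str, hypothesis: str) -> float:
    """Share of positions where the two word sequences agree."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref and not hyp:
        return 1.0
    matches = sum(r == h for r, h in zip(ref, hyp))
    return matches / max(len(ref), len(hyp))


def meaning_preserved(reference: str, hypothesis: str, critical_words: set) -> bool:
    """True only if every critical word in the reference survived unchanged."""
    ref, hyp = reference.split(), hypothesis.split()
    return all(r == h for r, h in zip(ref, hyp) if r in critical_words)


# Invented example: one wrong word out of ten reverses the instruction.
reference = "The patient should not take this medication before the surgery"
hypothesis = "The patient should now take this medication before the surgery"

print(f"{word_accuracy(reference, hypothesis):.0%}")      # 90%
print(meaning_preserved(reference, hypothesis, {"not"}))  # False
```

The word-level score looks high, yet the one word that changed is the one that carries the meaning.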

We must not forget that while human interpreters can make mistakes, they can also correct their errors as they go, which would likely be much more difficult with automated speech translation. Therefore, AI speech translation could be risky in scenarios where it is crucial to understand a speaker accurately, or where there is little room for error and no opportunity for self-correction.

When to Use Automated Speech Translation?

Automated speech translation is certainly not a substitute for human interpreting. However, it could be used in cases where human interpretation is not an option, such as when there is no budget for an interpreter or when a human interpreter is simply not available.

Let's consider a few scenarios: Sometimes, too few event attendees need an interpreter for the event planner to justify the cost of hiring one. If many languages are spoken at an event, you could use human interpreters for some languages and AI speech translation for the languages for which there is less demand. Other times, conference participants may be bilingual but prefer to hear what’s being said in their native language. Occasionally, an interpreter is needed at the last minute to cover a language but is not available at short notice. In some of these cases, automated speech translation can offer users a degree of language support.

Ask yourself: When is it safe to leave the decision to AI?

Ultimately, we need to be clear about the goal of our project, event, or business activity. We need to ask ourselves under which circumstances it is safe to leave decisions to AI and when human judgment and intelligence are required. At Interprenet, we help companies in this decision-making process with respect to the use of language solutions.

We work with each client to understand their use case and whether it makes sense to incorporate AI-powered technology to facilitate language services – especially for live events!

Here are some questions we consider when advising a client about the use of Automated Speech Translation:

- How complex and specialized is the topic that requires interpretation or multilingual live captioning? Will a lot of technical terms be used to get the message across?
- What are your expectations in terms of linguistic accuracy? In other words, is this a high-profile event where the nuances and cultural subtleties of a language are of paramount importance?
- Which target languages do you need? Are they rare languages or specific language pairs?
- Do you need a scalable solution, as you will be using it frequently?
- Is the original message delivered in a dynamic setting with lots of dialogue? Or is the original message a one-way presentation or speech delivered at a good pace by a speaker with no strong regional accent?

We will explore these and other questions with you when you request our language services for your live event and wonder whether automated speech translation could be an option. This way, we ensure you can choose the most suitable solution to get your message across in other languages to meet your unique objectives.